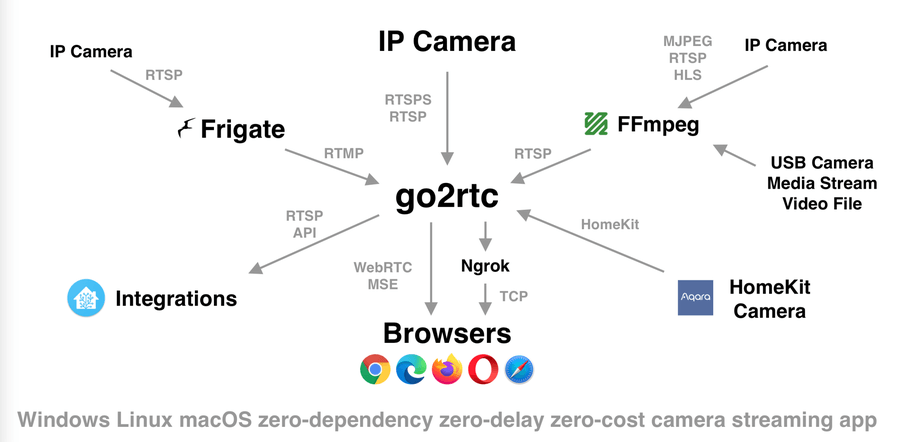

Go2rtc is a lightweight real-time communication proxy that supports multiple streaming protocols such as RTSP, WebRTC, HLS, and RTMP. It is widely adopted by smart home users, especially those using platforms like Home Assistant. Its versatility allows seamless bridging between different camera systems and viewing interfaces.

As with any real-time streaming system, performance bottlenecks can affect stream quality, latency, stability, and CPU/GPU usage. Identifying and resolving these issues ensures optimal operation and a better viewing experience. Whether integrating Go2rtc into a home automation setup or using it as a standalone streaming bridge, maintaining efficiency is key to reliability.

CPU Saturation from Transcoding Operations

Transcoding is one of the most resource-intensive tasks in video processing. Go2rtc supports transcoding to adapt video streams for compatibility with target protocols like WebRTC or HLS. However, real-time transcoding puts significant strain on the CPU, especially if multiple high-resolution streams are handled simultaneously.

Excessive CPU usage can lead to delayed frames, stream freezing, or total service unresponsiveness. Systems running on single-board computers or low-power servers may encounter bottlenecks when trying to transcode 1080p or 4K streams.

Mitigation involves offloading transcoding tasks to hardware encoders where possible. Leveraging Intel Quick Sync Video (QSV), NVIDIA NVENC, or AMD VCE can greatly reduce CPU load. Another strategy is to avoid transcoding altogether by ensuring source cameras deliver compatible codecs like H.264 in baseline or main profile.
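
The decision of whether a stream can skip transcoding can be sketched as a small check. This is only an illustration: the function name `needs_transcode` and the compatibility table are assumptions drawn from the codec guidance above, not part of Go2rtc itself.

```python
# Sketch only: the profile table mirrors the guidance in the text
# (H.264 baseline or main) and is an assumption, not Go2rtc's own logic.
COMPATIBLE_H264_PROFILES = {"baseline", "constrained baseline", "main"}


def needs_transcode(codec: str, profile: str) -> bool:
    """Return True when the stream likely needs re-encoding before delivery."""
    if codec.lower() != "h264":
        return True
    return profile.lower() not in COMPATIBLE_H264_PROFILES


for codec, profile in [("h264", "Main"), ("hevc", "Main"), ("h264", "High")]:
    action = "transcode" if needs_transcode(codec, profile) else "passthrough"
    print(f"{codec}/{profile}: {action}")
```

Streams flagged for passthrough avoid the encoder entirely, which is usually the cheapest option on low-power hardware.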

High Memory Usage Due to Multiple Streams

Handling multiple camera streams or output protocols simultaneously consumes memory. Each stream buffer and protocol-specific transformation increases RAM consumption. When memory becomes saturated, systems may begin swapping or crashing.

Memory bottlenecks are more common in systems running Dockerized Go2rtc with high-resolution RTSP sources or long stream buffers. Unoptimized containers or configurations that keep excessive buffer histories also contribute to this issue.

Reducing the number of simultaneously active output streams conserves memory. Lowering buffer durations in the configuration file and using direct passthrough where possible also limit memory strain. Monitoring memory consumption with tools like htop or docker stats helps detect early signs of overload.
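
A rough way to reason about buffer memory is to multiply bitrate by buffer duration and by the number of buffered copies. The helper below is a back-of-the-envelope sketch; the name `buffer_memory_mib` and the assumption that each output keeps its own copy are illustrative, not a description of Go2rtc internals.

```python
def buffer_memory_mib(bitrate_mbps: float, buffer_seconds: float, outputs: int = 1) -> float:
    """Approximate RAM held by buffered encoded video for one source.

    bitrate_mbps   -- encoded stream bitrate in megabits per second
    buffer_seconds -- how much video is kept in memory
    outputs        -- number of separately buffered output copies (assumption)
    """
    total_bytes = bitrate_mbps * 1_000_000 / 8 * buffer_seconds * outputs
    return total_bytes / (1024 * 1024)


# An 8 Mbps stream buffered for 10 seconds across three outputs.
print(f"{buffer_memory_mib(8, 10, 3):.1f} MiB")
```

With these inputs the estimate is about 28.6 MiB per camera, so halving the buffer duration or dropping an unused output has a directly proportional effect.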

Network Bandwidth Limitations Affecting Real-Time Performance

Live video streaming relies heavily on stable and fast network connections. A common bottleneck occurs when the available upload or download bandwidth is insufficient for high-bitrate streams. This issue becomes more pronounced when Go2rtc serves multiple clients concurrently or proxies streams to remote interfaces.

Symptoms include choppy video, desynchronization between audio and video, or long loading times for WebRTC or HLS streams. Wireless connections with variable signal strength or saturation can worsen these issues.

Placing the Go2rtc server on a wired Ethernet connection improves consistency. Stream bitrate and resolution should be reduced for remote access scenarios. Users with limited network infrastructure may consider utilizing adaptive bitrate streaming (ABR) if available.
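
The relationship between link capacity, stream bitrate, and viewer count can be estimated with simple arithmetic. The sketch below assumes every viewer receives a full copy of the stream and reserves a safety margin; the function name and the 25% default headroom are illustrative choices.

```python
def max_clients(link_mbps: float, stream_mbps: float, headroom: float = 0.25) -> int:
    """How many concurrent viewers fit on a link while keeping a safety margin."""
    if stream_mbps <= 0:
        raise ValueError("stream_mbps must be positive")
    usable = link_mbps * (1.0 - headroom)
    return int(usable // stream_mbps)


# A 20 Mbps uplink serving a 4 Mbps main stream versus a 1 Mbps substream.
print(max_clients(20, 4))
print(max_clients(20, 1))
```

Under these assumptions the uplink supports 3 viewers of the main stream but 15 viewers of the substream, which is why lower-bitrate streams are the usual choice for remote access.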

Latency from Protocol Conversion Overhead

Protocol bridging is a core feature of Go2rtc, enabling translation from RTSP to WebRTC, HLS, or others. However, converting between protocols can introduce latency. WebRTC is designed for sub-second delivery, whereas RTSP clients usually add their own receive buffer. HLS splits the stream into segments that players buffer before playback, which increases delay further.

These conversions create a delay mismatch, particularly noticeable in security applications or when monitoring real-time environments. Transcoding during conversion amplifies this latency further.

Minimizing protocol conversion improves latency. Where possible, delivering the stream in a form that needs no format change, such as passing an RTSP stream through directly, enhances real-time performance. Selecting the lowest-latency options available for WebRTC and shortening HLS segment durations also reduce delay.
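
The effect of HLS segment size on delay can be approximated as segment duration multiplied by the number of segments a player holds before starting playback. The sketch below assumes a player that buffers three segments, which is a common default but varies by player; the function name is hypothetical.

```python
def hls_latency_seconds(segment_seconds: float, buffered_segments: int = 3) -> float:
    """Approximate playback delay added by HLS segmenting and player buffering.

    buffered_segments is an assumption; real players differ.
    """
    return segment_seconds * buffered_segments


for seg in (6, 2, 1):
    print(f"{seg}s segments -> about {hls_latency_seconds(seg):.0f}s behind live")
```

Moving from 6-second to 2-second segments cuts the estimated delay from 18 seconds to 6 seconds, at the cost of more frequent requests from each client.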

Disk I/O Contention During Stream Recording

Some Go2rtc setups include video recording to local storage, typically using a media server or third-party integration. Writing multiple high-bitrate streams simultaneously stresses the disk, especially when using spinning drives or SD cards in embedded systems.

Disk I/O contention can stall stream writes, corrupt video segments, or slow down the entire system. Flash-based storage also wears out more quickly under sustained write conditions.

Recording should be directed to fast SSDs or network storage with appropriate write speeds. If storage limitations exist, reducing video resolution and disabling audio can lower the data footprint. File rotation and periodic cleanups prevent unnecessary bloat and maintain performance.
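
Sustained write load and retention can be estimated from camera bitrates before choosing storage. The helpers below are a sketch with hypothetical names; they assume constant bitrates and decimal units (1 GB = 1000 MB), and ignore container and filesystem overhead.

```python
def write_rate_mb_per_s(bitrates_mbps):
    """Combined sustained write rate in megabytes per second."""
    return sum(bitrates_mbps) / 8


def storage_per_day_gb(bitrates_mbps):
    """Data written per 24 hours in gigabytes."""
    return write_rate_mb_per_s(bitrates_mbps) * 86_400 / 1000


def retention_days(disk_gb, bitrates_mbps):
    """How many days of footage fit on a disk of the given size."""
    return disk_gb / storage_per_day_gb(bitrates_mbps)


cameras = [8, 8, 8, 8]  # four cameras at 8 Mbps each
print(write_rate_mb_per_s(cameras), "MB/s")
print(round(storage_per_day_gb(cameras), 1), "GB/day")
print(round(retention_days(1000, cameras), 1), "days on a 1 TB disk")
```

Four 8 Mbps cameras write about 4 MB/s continuously, roughly 345.6 GB per day, so a 1 TB disk holds under three days of footage unless resolution or bitrate is reduced.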

Improper Camera Stream Configuration

Incorrect or suboptimal RTSP stream configurations on the camera side directly affect Go2rtc performance. Cameras may default to high-bitrate or high-resolution modes, resulting in excessive resource use when processed by Go2rtc.

Moreover, some cameras expose multiple substreams but lack proper documentation, leading users to select inefficient or unsupported URLs. Streams with variable frame rates or unusual encoding profiles complicate transcoding.

Verifying camera settings before integration prevents these issues. Choosing a stream with a stable resolution (e.g., 1280×720) and codec (H.264 baseline) optimizes compatibility. Disabling extra camera features like motion overlays or audio channels can also reduce decoding complexity.
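
One way to verify a camera stream before integration is to inspect it with ffprobe (for example `ffprobe -v error -show_streams -of json <url>`) and summarize the result. The sketch below only parses JSON of that shape; the sample data is hypothetical and the function name is an illustrative choice.

```python
import json
from fractions import Fraction


def summarize_video_stream(ffprobe_json: str) -> dict:
    """Extract codec, profile, resolution, and frame rate of the first video stream."""
    streams = json.loads(ffprobe_json).get("streams", [])
    video = next((s for s in streams if s.get("codec_type") == "video"), None)
    if video is None:
        raise ValueError("no video stream found")
    try:
        fps = float(Fraction(video.get("avg_frame_rate", "0/1")))
    except (ZeroDivisionError, ValueError):
        fps = 0.0  # ffprobe reports "0/0" when the rate is unknown
    return {
        "codec": video.get("codec_name"),
        "profile": video.get("profile"),
        "resolution": f'{video.get("width")}x{video.get("height")}',
        "fps": fps,
    }


# Hypothetical sample shaped like ffprobe's JSON output.
SAMPLE = """{"streams": [
  {"codec_type": "video", "codec_name": "h264", "profile": "Main",
   "width": 1280, "height": 720, "avg_frame_rate": "15/1"},
  {"codec_type": "audio", "codec_name": "aac"}
]}"""

print(summarize_video_stream(SAMPLE))
```

Comparing this summary across a camera's main stream and substreams makes it easier to pick the URL with the intended codec and resolution.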

WebRTC Session Load from Multiple Clients

WebRTC is ideal for low-latency streaming, but it requires complex peer-to-peer signaling and ICE negotiation. When many users access the same WebRTC stream simultaneously, the server must maintain multiple session states and potentially relay traffic through TURN servers.

Session overload increases CPU usage and impacts responsiveness. Client disconnections may occur unexpectedly, or video quality may degrade significantly during concurrent access.

Load balancing WebRTC connections or limiting concurrent viewers helps preserve performance. Where possible, defaulting non-critical viewers to HLS or another HTTP-based format reduces WebRTC load. Local clients should connect directly over the LAN, with STUN used only for address discovery, so that their traffic is not relayed through a TURN server unnecessarily.
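
Limiting WebRTC sessions while sending remaining viewers to HLS can be sketched as a small routing policy. The class below is hypothetical application-side logic, for example in a dashboard that decides which player to embed; it is not a Go2rtc feature.

```python
class ViewerRouter:
    """Give WebRTC to critical viewers while slots remain; everyone else gets HLS."""

    def __init__(self, max_webrtc):
        self.max_webrtc = max_webrtc
        self.webrtc_viewers = set()

    def connect(self, viewer_id, critical=False):
        if viewer_id in self.webrtc_viewers:
            return "webrtc"  # already holds a slot
        if critical and len(self.webrtc_viewers) < self.max_webrtc:
            self.webrtc_viewers.add(viewer_id)
            return "webrtc"
        return "hls"

    def disconnect(self, viewer_id):
        # Frees the slot if the viewer held one; harmless otherwise.
        self.webrtc_viewers.discard(viewer_id)


router = ViewerRouter(max_webrtc=2)
for viewer, critical in [("guard", True), ("owner", True), ("tablet", True), ("guest", False)]:
    print(viewer, "->", router.connect(viewer, critical))
```

A cap like this keeps the number of live WebRTC sessions predictable, and the cap itself can be tuned to the CPU headroom of the host.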

Inadequate Container or Host Resource Allocation

Docker containers often encapsulate Go2rtc in smart home environments. If container resource limits are not configured correctly, Go2rtc may run with insufficient CPU or memory, even when host hardware has available capacity.

This bottleneck leads to unpredictable behavior, including stalled streams, timeout errors, or container crashes. Similar issues arise when the host operating system restricts access to hardware acceleration resources.

Adjusting container configuration to allow higher CPU shares and memory limits ensures smoother operation. Binding the container to the appropriate host devices, such as GPUs or video acceleration modules, improves transcoding efficiency. Monitoring container logs and adjusting cgroups parameters help identify and resolve these limitations.
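
To check which limits a container is actually running under, the cgroup v2 files `cpu.max` and `memory.max` can be read from inside it. The sketch below assumes a cgroup v2 host with the hierarchy mounted at `/sys/fs/cgroup`; the function names are illustrative, and on cgroup v1 systems the files differ.

```python
from pathlib import Path


def parse_cpu_max(text):
    """Parse cgroup v2 cpu.max ("<quota> <period>" or "max <period>") into CPU count."""
    quota, period = text.split()
    if quota == "max":
        return None  # no CPU limit set
    return int(quota) / int(period)


def parse_memory_max(text):
    """Parse cgroup v2 memory.max (byte count or "max") into bytes."""
    value = text.strip()
    return None if value == "max" else int(value)


def read_limits(root="/sys/fs/cgroup"):
    """Read limits if the files exist; returns None for anything unavailable."""
    base = Path(root)
    cpu_file, mem_file = base / "cpu.max", base / "memory.max"
    return {
        "cpus": parse_cpu_max(cpu_file.read_text()) if cpu_file.exists() else None,
        "memory_bytes": parse_memory_max(mem_file.read_text()) if mem_file.exists() else None,
    }


print(read_limits())
```

A result such as 0.5 CPUs or a few hundred megabytes of memory on otherwise capable hardware suggests the container limits, rather than the host, are the bottleneck.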

TLS/SSL Overhead on Encrypted Connections

When Go2rtc streams are served over HTTPS or secure WebSocket (WSS) connections, encryption overhead adds computational load. Each connection requires handshake negotiation, encryption, and decryption. WebRTC media is additionally encrypted with DTLS-SRTP regardless of how signaling is served. On low-powered systems, the cumulative effect of these secure sessions impacts performance.

Though essential for remote access security, TLS overhead can become significant, particularly during peak usage or with multiple endpoints.

Terminating TLS at a reverse proxy such as Nginx or Caddy moves HTTPS encryption off Go2rtc itself. These proxies can cache sessions and reduce handshake repetition. On LAN-only setups, disabling HTTPS may be acceptable if local security policies permit it.

Buffer Bloat Due to Large Stream Queues

Go2rtc uses internal buffers to manage frame timing and delivery across protocols. Overly large buffers delay stream availability and amplify latency. When memory becomes scarce, these buffers may overflow or be purged abruptly, causing stream jitter.

Such issues are common when default buffer settings are left unoptimized or when streams are consumed slowly by clients.

Where the configuration exposes them, tuning buffer-related settings in the go2rtc.yaml file helps prevent this issue. Using protocol-specific options to define read/write timeouts, jitter buffer, or prebuffer lengths helps maintain consistency. A balance must be struck between latency and jitter tolerance.
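
The trade-off between buffer depth and latency can be illustrated with a bounded queue that drops the oldest frame when full. This is a generic sketch of the technique, not Go2rtc's internal buffer; the class name and frame representation are hypothetical.

```python
from collections import deque


class FrameBuffer:
    """Bounded FIFO that discards the oldest frame instead of growing without limit."""

    def __init__(self, max_frames):
        self._frames = deque(maxlen=max_frames)
        self.dropped = 0

    def push(self, frame):
        if len(self._frames) == self._frames.maxlen:
            self.dropped += 1  # deque evicts the oldest frame on append
        self._frames.append(frame)

    def pop(self):
        return self._frames.popleft() if self._frames else None

    def added_latency_seconds(self, fps):
        """Delay contributed by frames currently waiting in the buffer."""
        return len(self._frames) / fps


buf = FrameBuffer(max_frames=3)
for frame_number in range(5):  # a slow client lets five frames pile up
    buf.push(frame_number)
print("dropped:", buf.dropped, "next frame:", buf.pop())
```

A smaller `max_frames` keeps the stream closer to live but drops frames sooner when a client falls behind, while a larger value absorbs jitter at the cost of added delay.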

Conflicts from Concurrent Software Usage

Running Go2rtc alongside other camera or media-related services, such as Frigate, MotionEye, or VLC, can lead to stream conflicts. Simultaneous access to the same RTSP feed results in dropped connections or stream corruption if the camera does not support multiple connections.

Such contention leads to intermittent availability or incomplete frames in Go2rtc outputs.

Sharing camera feeds through Go2rtc rather than having each service connect directly eliminates this issue. Go2rtc can act as the single consumer from the camera and rebroadcast internally using RTSP or WebRTC to other services. This architecture centralizes stream handling and minimizes device overload.
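
Finding cameras that several services pull from directly, and pointing those services at a single restream instead, can be sketched as follows. The service names and camera URLs are hypothetical, and the restream URL layout (port 8554 with the stream name as the path) is an assumption that should be checked against the actual Go2rtc configuration.

```python
from collections import defaultdict


def shared_sources(service_urls):
    """Return camera URLs consumed directly by two or more services."""
    by_url = defaultdict(list)
    for service, urls in service_urls.items():
        for url in urls:
            by_url[url].append(service)
    return {url: sorted(svcs) for url, svcs in by_url.items() if len(svcs) > 1}


def restream_url(host, stream_name, port=8554):
    """Build the URL a service would use instead of the camera (assumed layout)."""
    return f"rtsp://{host}:{port}/{stream_name}"


# Hypothetical setup: two services both open the same camera feed.
services = {
    "frigate": ["rtsp://cam1.local/main"],
    "motioneye": ["rtsp://cam1.local/main", "rtsp://cam2.local/main"],
}
for url, consumers in shared_sources(services).items():
    print(url, "is shared by", consumers)
print("replace with:", restream_url("192.168.1.10", "cam1"))
```

After the change, the camera sees one connection from Go2rtc, and each service reads the rebroadcast stream.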

Version Incompatibility with Dependencies

As Go2rtc evolves rapidly, changes in dependencies such as FFmpeg or WebRTC libraries can introduce compatibility issues. Running outdated versions may miss performance improvements, while using unstable beta versions can lead to regressions.

Some users experience degraded performance after updates due to configuration mismatches or deprecated flags.

Regularly updating Go2rtc to stable releases ensures bug fixes and performance patches are applied. Reviewing changelogs before upgrading and testing in staging environments prevents production impact. When used with Home Assistant add-ons, waiting for validated community updates enhances stability.

FAQs

How much CPU is typically required to run Go2rtc with three 1080p RTSP streams?

A modern quad-core CPU is usually more than sufficient for direct passthrough, since packets are forwarded without decoding or re-encoding. If transcoding is needed, hardware acceleration is recommended to maintain performance without overloading the processor.

Does Go2rtc support hardware acceleration for video transcoding?

Yes, Go2rtc can use hardware encoders like Intel Quick Sync, NVIDIA NVENC, or AMD VCE through FFmpeg, provided the FFmpeg build supports the encoder and, when running in Docker, the GPU device is passed into the container.

Is it better to use WebRTC or HLS for low-latency streaming?

WebRTC is optimized for low-latency real-time communication but uses more resources. HLS introduces higher latency due to chunking but scales better for multiple viewers.

Can Go2rtc be run on a Raspberry Pi?

Yes, but resource limitations may restrict performance. Direct stream passthrough is recommended, and transcoding should be avoided on such hardware.

How can I monitor Go2rtc resource usage in real-time?

Tools like htop, docker stats, or Home Assistant system monitor integrations provide real-time insights into CPU, memory, and network usage.

Conclusion

Go2rtc is a powerful and flexible tool for real-time stream management, but it is not immune to performance limitations. From CPU-heavy transcoding to memory saturation, buffer overflow, and protocol conversion latency, a wide range of factors can affect its efficiency. Proper configuration and strategic resource allocation are critical to sustaining optimal performance.

Understanding how each component, from stream source to protocol output, interacts with Go2rtc enables users to detect bottlenecks early. Once identified, targeted mitigation strategies improve responsiveness, reduce system load, and enhance reliability.

Using best practices, such as minimizing transcoding, reducing buffer sizes, leveraging hardware acceleration, and isolating workloads in containers, significantly improves the overall experience. Whether deployed for a few smart cameras or an advanced multi-protocol surveillance setup, Go2rtc performs at its best when systems are configured thoughtfully and monitored consistently.